The history of Computer System Architecture has created some highly durable abstractions and operational specifications (programming interfaces and communication protocols). We have spent decades enhancing and embellishing them. These abstractions may seem like a city’s skyline built on solid ground. But this foundation floats atop a sea of fire, and so its stability is inevitably punctuated by cataclysmic change.

- Monolithic mainframe and departmental multitasking incumbents, including IBM System/360, DEC TOPS-20, and Sperry-Rand’s Univac, were pushed out by the Unix (later POSIX) primitive-based operating system kernel.

- Data networking based on circuit switching descended from the Bell Telephone System was eclipsed by the Internet’s datagram network architecture.

- Complex and microcoded processor architecture was revolutionized by Reduced Instruction Set Computing (RISC).

- Monolithic storage subsystems were made obsolete by Redundant Array of Inexpensive Disks (RAID).

During such events, solid abstractions cease to offer stable support. The familiar infrastructure on which applications are built becomes fluid. The complex systems, products and organizations that rely on them may bend and sway, but many will break and collapse. The evolution of Computer System Architecture is a story of the “creative destruction” of a series of strong abstractions, in each case exposing new ones that are more flexible and general. We are overdue for a shock that can loosen the grip of the abstractions that form the bedrock of our current Information and Communication Technology (ICT) environment, namely POSIX and the Internet.

Virtualization of early electronic devices led to the creation of abstractions that had attributes not found in any actually existing physical system. An important example is the multitasking operating system, which enabled the on-demand creation of processes which simulate entire von Neumann computers. The OS also facilitated the flexible use of virtual memory to modify the address space of an executing process. The role of the OS also expanded to include flexible file management using block-addressable storage, and metadata including file names and access attributes. Network access came even later.

Defining powerful abstractions is a useful aid in building complex applications. When an application community adopts a common interface as the means of accessing the resources they require, that community obtains the benefits of application interoperability and implementation independence.

- Application interoperability means that applications built on the common abstraction can work with one another, which can include communication between applications and across networks.

- Implementation independence means that different implementations of the common abstraction can be substituted without requiring changes to applications.
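Both properties can be illustrated with a minimal Python sketch. The `BlockStore` interface, the two implementations and the `publish`/`consume` applications are hypothetical names invented for this illustration; they are not drawn from POSIX, the Internet protocols or any real library.

```python
from typing import Protocol


class BlockStore(Protocol):
    """Hypothetical common interface (the narrow waist of the hourglass)."""

    def put(self, key: str, data: bytes) -> None: ...
    def get(self, key: str) -> bytes: ...


class DictStore:
    """One implementation: keeps the latest value per key in a dictionary."""

    def __init__(self) -> None:
        self._blocks: dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        self._blocks[key] = data

    def get(self, key: str) -> bytes:
        return self._blocks[key]


class LogStore:
    """A different implementation: an append-only log scanned from the end."""

    def __init__(self) -> None:
        self._log: list[tuple[str, bytes]] = []

    def put(self, key: str, data: bytes) -> None:
        self._log.append((key, data))

    def get(self, key: str) -> bytes:
        for logged_key, data in reversed(self._log):
            if logged_key == key:
                return data
        raise KeyError(key)


def publish(store: BlockStore, text: str) -> None:
    """Application 1: writes a message through the common interface."""
    store.put("greeting", text.encode("utf-8"))


def consume(store: BlockStore) -> str:
    """Application 2: reads what application 1 wrote, knowing only the interface."""
    return store.get("greeting").decode("utf-8")


def round_trip(store: BlockStore, text: str) -> str:
    publish(store, text)
    return consume(store)
```

The two applications interoperate only because both speak `BlockStore`, and `round_trip` behaves the same whether it is handed a `DictStore` or a `LogStore`, which is implementation independence in miniature.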

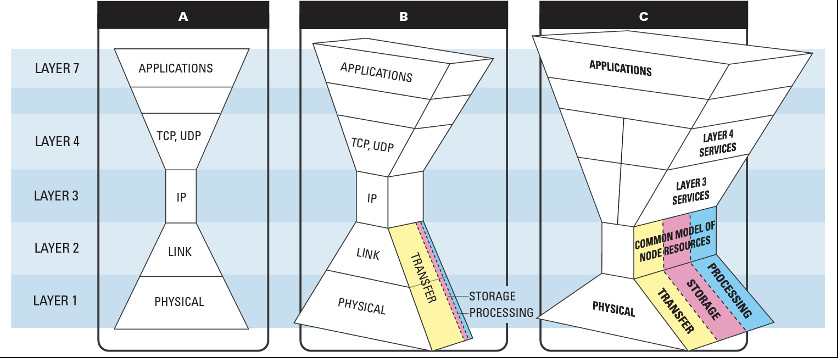

A fixed common layer can give rise to a large number of applications that are based on it and a great diversity of implementations. This idea is often expressed in a diagram that is iconic in system design, namely the hourglass model (Figure 1A).

Figure 1: Lowering the common service layer (identified as Layer 3 in the current Network stack) enables the implementation of much more general services that provide access to all categories of distributed resources: data transfer, storage and processing.

Credit: David Rogers, Interoperable Convergence of Storage, Networking and Computation, Future of Information and Communication Conference, 14-15 March 2019, San Francisco.

The history of Computer System Architecture shows us that the adoption of a common interface has been a powerful enabling strategy. It gives rise to a straightforward way of making progress in the complexity and diversity of applications and implementations. The common interface remains fixed but applications are progressively embellished with more features and capabilities. This also enables investment in diverse and powerful new implementations, knowing that they have a ready market of potential adopters.

Fixed common abstractions are so powerful that they induce the creation of highly specialized fields of training and inquiry (sometimes called “silos” or “stovepipes”). The community uses these silos as a bedrock for our thinking, our technologies, our markets and even our organizational structures. Examples of such bedrock abstractions are the current models of executing processes, mass storage systems and communication networks.

The other effect of adopting a common abstraction can be to constrain generality. Resources or capabilities that exist in the layers that support a common abstraction are not directly accessible to applications that are implemented using it. This gives rise to strategies that seek to escape any limitations of the common layer. These can include building new capabilities using the current standard (overlay implementation) or directly accessing low level capabilities without going through the standard (layering violation). These strategies can free the community from some of the constraints of old, unchanging mechanisms. But they cannot unlock the full potential of the fire of innovation that burns down below, in the technologies that have evolved to meet demands that were not accounted for in past designs. Examples of radical innovation at lower layers include Remote Direct Memory Access (RDMA) and Network Function Virtualization.

A natural response to encountering limitations in common standards is to update them. This can work well for software abstractions, where updates for bug fixes and enhanced functionality are part of the maintenance cycle. However, in ICT infrastructure, common abstractions are built into hardware or firmware that is rarely or perhaps never updated during the life of a device. Change to infrastructure can seem impossible. Part of the problem is the high level of individual and community investment in standards that seem impervious to change (a phenomenon called “path dependence”). If vendors or regulators attempt to radically modify standard abstractions, the community may simply not accept the changes.

The ICT community has invested its way deep into a technological cul-de-sac based on the POSIX and Internet standards. Given this state of affairs, how is it possible to make progress? Historically, the answer has been to design a new common abstraction that can take advantage of new implementation strategies. Any candidate standard must support capabilities and features that can satisfy the unmet needs of a powerful application community.

To establish each of the current ICT abstractions, progress was made in the same way. Existing common abstractions, widely seen as unchangeable and having reached “the end of history,” were overthrown. The capabilities of lower layer technologies were exposed to build alternatives to those constraining standards. The design problem that occurred in each case was how to model the diversity that exists in the lower layers without sacrificing the design discipline imposed by the hourglass model. The answer has been to lower the level of the common abstraction to the layer that was previously below the old standard.

There is a common element that can be found in the design of these new abstractions, as well as many other successful standards. It is to seek a common abstraction that is logically weak while still being able to support the class of applications that is considered necessary. Logical weakness means generality: the standard does not impose uniformly high levels of performance or control on the elements that are exposed through its interface. An example of this is “best effort” Internet service, which can have excellent performance, but may also be highly attenuated. The weakness of a specification creates the space for diversity within the standard.

A corollary to seeking weakness is that it tends to drive silos to converge. Each silo is defined by a strong guarantee: a process must have deterministic control; a file must have long-term stability; a wide area network must have unlimited reach. These are not necessarily properties of the underlying technologies (“reality is best effort”), and a more general standard will tend to expose the commonality in their low-level implementation.

This leads to a proposal for how the current ICT impasse can be broken: by defining process execution, data management and wide area networking in terms of the common elements of their implementation (Figure 1C). These elements are the building blocks of all modern digital systems: persistent buffers (implemented using a variety of solid state, magnetic or physical media) and local area operations on sets of buffers (which include both computation carried out within a node and communication between LAN-adjacent nodes). These abstractions are used to implement the current processing, storage and networking silos. But they can also be used to implement a wide variety of new digital services which do not fit into these pigeonholes. The name we give to this new layering scheme, which is a candidate for community adoption, is Exposed Buffer Architecture. It seeks to tap the fire down below.
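To make the two building blocks concrete, here is a toy Python sketch under stated assumptions. The `Node` class and the `write`, `transfer` and `operate` functions are hypothetical names chosen for illustration; they are not an interface defined by Exposed Buffer Architecture. The sketch only shows that storage, forwarding and computation can all be phrased as local operations on buffers.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Node:
    """Hypothetical node: named buffers plus the names of LAN-adjacent nodes."""

    name: str
    buffers: dict[str, bytes] = field(default_factory=dict)
    neighbors: set[str] = field(default_factory=set)


def write(node: Node, buf_id: str, data: bytes) -> None:
    """Storage: place data in a buffer on one node."""
    node.buffers[buf_id] = bytes(data)


def transfer(src: Node, src_id: str, dst: Node, dst_id: str) -> None:
    """Communication: copy a buffer, but only between adjacent nodes."""
    if dst.name not in src.neighbors:
        raise ValueError(f"{dst.name} is not LAN-adjacent to {src.name}")
    dst.buffers[dst_id] = src.buffers[src_id]


def operate(node: Node, op: Callable[..., bytes],
            in_ids: list[str], out_id: str) -> None:
    """Computation: apply op to buffers on one node, store the result there."""
    inputs = [node.buffers[buf_id] for buf_id in in_ids]
    node.buffers[out_id] = op(*inputs)


def xor(a: bytes, b: bytes) -> bytes:
    """Example operation (an assumption of this sketch): bytewise XOR."""
    return bytes(x ^ y for x, y in zip(a, b))


def demo() -> bytes:
    # Three nodes in a line: a <-> b <-> c. Nodes a and c are not adjacent.
    a = Node("a", neighbors={"b"})
    b = Node("b", neighbors={"a", "c"})
    c = Node("c", neighbors={"b"})

    write(a, "msg", b"\x0f\xf0")      # storage at a
    write(c, "key", b"\xff\xff")      # storage at c
    transfer(a, "msg", b, "relay")    # first hop
    transfer(b, "relay", c, "msg")    # second hop: wide area reach from local steps
    operate(c, xor, ["msg", "key"], "out")  # computation at c
    return c.buffers["out"]
```

In this toy model, end-to-end delivery from `a` to `c` is not a primitive; it is composed from two adjacent transfers, and the same three operations could equally be arranged into a service that is neither purely storage, networking nor processing.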

Micah D. Beck (mbeck@utk.edu) is an associate professor at the Department of Electrical Engineering and Computer Science, University of Tennessee, Knoxville, TN, USA.