“I need a dashboard,” says the stakeholder. Just get the data and dash it. Put it on the board. Up-lines should be trending up and the down-lines trending down. Add some graphics for sizzle. It should be a quick effort as some of the data is already available, or so the stakeholder thinks. What could go wrong?

The Road To A Dashboard

The high-level steps to preparing a dashboard seem relatively straightforward:

- Get the data

- Prepare the data

  - Clean

  - Combine, when working with multiple sources

  - Augment

- Analyze the data
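As a rough illustration of how small the quick version of these steps can look, here is a minimal Python sketch. Everything in it is hypothetical: the two in-memory "sources," the field names, the cost threshold, and the function names are assumptions made for illustration, not a description of any particular tool or dataset.

```python
from collections import defaultdict

# Hypothetical raw extracts; field names and values are illustrative only.
clinical_rows = [
    {"patient_id": " p1 ", "diagnosis": "cad"},
    {"patient_id": "P2", "diagnosis": "CAD"},
    {"patient_id": "P2", "diagnosis": "CAD"},   # duplicate record
    {"patient_id": "", "diagnosis": "CAD"},     # missing identifier
]
billing_rows = [
    {"patient_id": "P1", "amount": 90.0},
    {"patient_id": "P2", "amount": 80.0},
    {"patient_id": "P2", "amount": 40.0},
]


def clean(rows):
    """Normalize identifiers and codes, drop rows with no identifier, de-duplicate."""
    seen, out = set(), []
    for row in rows:
        pid = row["patient_id"].strip().upper()
        if not pid:
            continue
        record = (pid, row["diagnosis"].strip().upper())
        if record not in seen:
            seen.add(record)
            out.append({"patient_id": record[0], "diagnosis": record[1]})
    return out


def combine(clinical, billing):
    """Join the two sources on patient_id, summing billed amounts per patient."""
    totals = defaultdict(float)
    for row in billing:
        totals[row["patient_id"]] += row["amount"]
    return [dict(row, billed_total=totals.get(row["patient_id"], 0.0)) for row in clinical]


def augment(rows, threshold=100.0):
    """Add a derived flag; the threshold is an arbitrary illustrative value."""
    return [dict(row, high_cost=row["billed_total"] >= threshold) for row in rows]


def analyze(rows):
    """Produce the single number a dashboard tile might show."""
    return sum(1 for row in rows if row["high_cost"]) / len(rows)


prepared = augment(combine(clean(clinical_rows), billing_rows))
share_high_cost = analyze(prepared)
print(f"Share of high-cost patients: {share_high_cost:.0%}")
```

Even this toy version has to make decisions about normalization, duplicates, and missing identifiers, and each of those decisions affects the number on the tile.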

This could all be implemented as a one-off exercise in a single dashboarding tool, perhaps even on an analyst’s laptop, and possibly all before lunch depending on the size of the data. Quick-n-dirty reports serve an important role in the spectrum of analytic deliverables. However, as with potato chips, stakeholders sometimes have trouble stopping with just one dashboard. A portfolio of analytic deliverables raises a variety of questions regarding commonality and consistency of methods and analytic results. An effective dashboard makes complex analysis easy to understand in small digestible bites and necessarily hides layers of complexity. But the details matter . . . oh, how they matter. Sometimes it’s potato chip bowls of complexity on the backs of turtles all the way down. Let’s examine a few analytic use-cases.

Example – Clinical Measures

Humans have been practicing medicine for thousands of years, yet the analysis of populations is a fairly recent discipline, all things considered. Epidemiology only emerged in the 19th century, with breakthroughs such as John Snow’s study of cholera. Clinical measures are one of my favorite population analytic use-cases because they sound so simple at first: ratios of patient populations, or patient-events. Work on standardized clinical measures at the national level dates back at least to the 1980s, with efforts by the Joint Commission and the National Committee for Quality Assurance on clinical quality and efficacy studies, no doubt inspired by earlier research efforts. Clinical measures were one of the core functionalities of Explorys, a healthcare informatics company I was at from 2009 to 2019.

Challenges with computing clinical measures start with the required data: it’s complex and, especially in the early days of Electronic Health Record deployments, was famously variable and un-standardized. Additionally, comprehensive analysis typically required multiple sources of data—combining clinical and billing data—which necessitated additional levels of data preparation for entity relationship and patient matching, another problem that is deceptively simple-sounding.

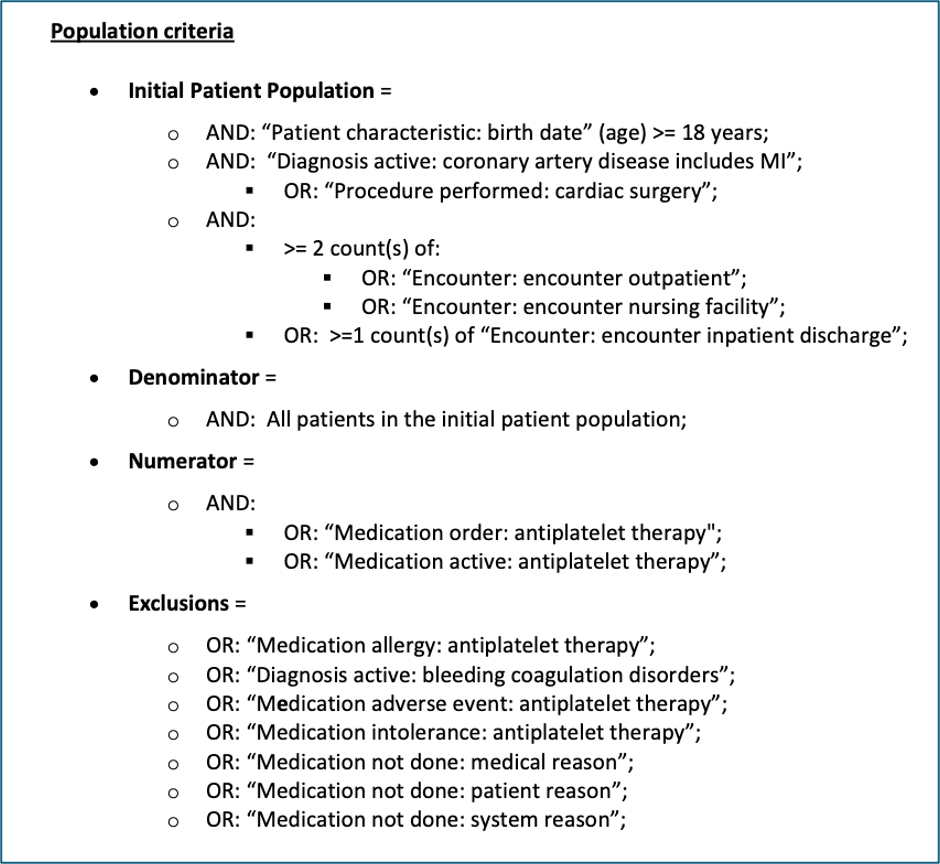

And then there are the measures themselves. Picked at random, this is NQF 0067 – Coronary Artery Disease (CAD): Oral Antiplatelet Therapy Prescribed for Patients with CAD:

To be specific, this is the human-readable version of the measure (the actual term for the format). It still doesn’t look that bad at first glance, but a few things become clear upon closer inspection. First, there is logic in the population criteria: not only Boolean logic with AND/ORs, but also event counts. Second, the criteria, as specific as they seem, still leave room for interpretation. “Medication active: antiplatelet therapy” is a class of medications which could, in turn, be represented in a number of ways. This is why clinical measure definitions are also often accompanied by substantial metadata describing what each of the concepts actually means, right down to the code and codeset/terminology, to reduce ambiguity as much as possible.
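To make the point about logic and interpretation concrete, here is a minimal Python sketch of a ratio-style measure. It is not the NQF 0067 specification: the value-set codes are placeholders rather than real terminology codes, and the age and encounter-count thresholds are assumptions chosen only to show Boolean criteria combined with an event count.

```python
from dataclasses import dataclass, field

# Hypothetical value sets: placeholder codes, not real terminology codes
# and not the published measure metadata.
CAD_DIAGNOSIS_CODES = {"DX-CAD-1", "DX-CAD-2"}
ANTIPLATELET_CODES = {"RX-ASPIRIN", "RX-CLOPIDOGREL"}


@dataclass
class Patient:
    patient_id: str
    age: int
    diagnoses: set = field(default_factory=set)
    active_medications: set = field(default_factory=set)
    outpatient_encounters: int = 0


def in_denominator(p, min_age=18, min_encounters=2):
    """Boolean criteria AND an event count (both thresholds are assumed values)."""
    return (
        p.age >= min_age
        and bool(p.diagnoses & CAD_DIAGNOSIS_CODES)
        and p.outpatient_encounters >= min_encounters
    )


def in_numerator(p):
    """Denominator patients with any active medication in the value set."""
    return in_denominator(p) and bool(p.active_medications & ANTIPLATELET_CODES)


def measure_counts(patients):
    """Return (numerator count, denominator count) for the population."""
    denominator = [p for p in patients if in_denominator(p)]
    numerator = [p for p in denominator if in_numerator(p)]
    return len(numerator), len(denominator)


patients = [
    Patient("A", 64, {"DX-CAD-1"}, {"RX-ASPIRIN"}, 3),      # meets both
    Patient("B", 71, {"DX-CAD-2"}, set(), 2),               # denominator only
    Patient("C", 58, {"DX-CAD-1"}, {"RX-CLOPIDOGREL"}, 1),  # too few encounters
    Patient("D", 45, {"DX-OTHER"}, {"RX-ASPIRIN"}, 4),      # no qualifying diagnosis
]
numerator, denominator = measure_counts(patients)
print(f"{numerator} of {denominator} eligible patients met the measure")
```

Note how much of the result depends on the contents of the two value sets. Change which codes count as “antiplatelet therapy” and the same patients produce a different ratio, which is exactly why the accompanying metadata matters.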

There are many other levels of complexity, such as varying reporting periods (trailing 12 months vs. calendar), event sequencing (e.g., first occurrence of event A happens before first occurrence of event B), and provider attribution (which provider is responsible for any given measure). Plus, as with potato chips—nobody has just one—clinical measures are often undertaken with a program perspective and corresponding sets of calculations.
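Two of these complications, reporting periods and event sequencing, can be sketched briefly in Python. This is an illustration under stated assumptions: the “trailing 12 months” definition used here (the 12 months ending on an as-of date, inclusive) is only one of several plausible readings, and the event labels "A" and "B" and all dates are made up.

```python
from datetime import date, timedelta


def calendar_year_period(as_of):
    """The calendar year containing as_of, as an inclusive (start, end) pair."""
    return date(as_of.year, 1, 1), date(as_of.year, 12, 31)


def trailing_12_month_period(as_of):
    """The 12 months ending on as_of, inclusive (one assumed definition)."""
    try:
        one_year_back = as_of.replace(year=as_of.year - 1)
    except ValueError:
        # as_of is Feb 29 and the prior year has no such day
        one_year_back = as_of.replace(year=as_of.year - 1, day=28)
    return one_year_back + timedelta(days=1), as_of


def first_occurrence(events, kind, period):
    """Earliest date of the given event kind inside the period, or None."""
    start, end = period
    dates = [d for k, d in events if k == kind and start <= d <= end]
    return min(dates) if dates else None


def a_before_b(events, period, a="A", b="B"):
    """True if the first A in the period precedes the first B in the period."""
    first_a = first_occurrence(events, a, period)
    first_b = first_occurrence(events, b, period)
    return first_a is not None and first_b is not None and first_a < first_b


# Hypothetical event history for one patient
events = [
    ("B", date(2022, 11, 5)),
    ("A", date(2023, 2, 10)),
    ("B", date(2023, 6, 1)),
]
as_of = date(2023, 8, 31)

calendar_result = a_before_b(events, calendar_year_period(as_of))
trailing_result = a_before_b(events, trailing_12_month_period(as_of))
print(calendar_result, trailing_result)
```

With the same patient and the same events, the sequencing criterion is met under the calendar-year period and not met under the trailing period, because the earlier "B" event only falls inside the trailing window. A dashboard shows one number either way.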

Today, clinical measures are often related to reimbursement and payment programs (CMS’s Quality Payment Program, for example). Money makes for a great motivator, and when money is at stake, it heightens the need for accuracy, understandability, and stability in analytic delivery. This not only requires an investment in dashboards that are easy to comprehend, but also in operational data and analytic pipelines that can scale and provide results every day.

Healthcare providers might only see a dashboard of measure results, but there is so much else that needs to happen to produce those calculations.

Example – CitiStat

CitiStat is a performance management program for local government first popularized in Baltimore in 2000 by Mayor Martin O’Malley, then adopted by many cities across the U.S. StateStat became a statewide performance management program in Maryland in 2007 after O’Malley became governor. Both were influenced by New York City’s CompStat program for policing in the 1990s. As with healthcare, local governments and city-states have existed for millennia, but they have been relatively late adopters of data management and analytics techniques.

As I described in the BLOG@CACM post “Operational and Analytic Data Cycles,” operational systems can be a rate-limiting factor in supporting analytics in many ways, such as outdated solutions that don’t capture required data, lack of support for data integration, or inconsistent uptimes, to name a few. An extreme version of this problem is where the operational system is paper (don’t laugh, we’re talking about local government). Any of these can lead to “analytics” that amount to manually filling in a spreadsheet with things people believe to be true about a data source at a given point in time, making the work 100% manual with close to 0% reproducibility. Without effective operational systems, analytic efforts can be hobbled from the start, even before analytic data platforms come into play and present their own design challenges.

Tone and intention play an important role in process improvement and in turning analytic deliverables into action. Leadership may say “get me the numbers, quick!” But what then? There is a difference between attempting to materially address a problem and looking for a scapegoat or just trying to make the problem go away. The Wire was a 2000s-era police drama set in Baltimore that featured a fictional “ComStat” program in which police staff presented crime statistics and got yelled at by their superiors in a setting that was a combination of internal press conference and tribunal. Granted, The Wire was a TV show, but I would hazard a guess that it represented at least a few CitiStat experiences across the country. Hard problems require hard conversations, for sure, but they also require collaboration to work through. This is important in any sector, but especially in the public sector.

Another factor is whether leadership actually wants the answers. Improving patient outcomes is a valid reason to calculate clinical measures, but it is still a somewhat abstract one, though not to affected patients. Money, on the other hand, always gets leadership’s attention: if clinical measures are required for reimbursements, then they shall be calculated. Local, regional, and state governments should be interested in performance metrics, but interest tends to vary by administration. CitiStat was widely considered a success in Baltimore in its early years, as was StateStat in Maryland. But per a 2017 article in Governing, StateStat was significantly undone by O’Malley’s gubernatorial successor for reasons having little to do with the effectiveness of the program itself, as his successor was a longtime critic and member of a different political party. It started with a department rename, but as the article described, “In practice, it looked more like a gut job than a rebranding effort. [Governor] Hogan cut the office’s budget in half, reduced the staff from nine positions to four, and moved the headquarters from Annapolis to a small town 20 minutes outside the state capital.” Performance management programs should be considered an asset at every level of government. Dashboards can represent insights into operations and opportunities for improvement. Unfortunately, such dashboards can also be viewed as a source of pesky questions.

Conclusion

There are many technical details that go into successful dashboards and analytic efforts in general, such as data integration, data management frameworks, data governance, analytic frameworks, cloud platforms, DevOps/SRE principles, etc. The references section below has a selection of BLOG@CACM posts I’ve written on these topics. But as important as technical topics are to enable analytic initiatives, top-down leadership support for such initiatives is even more important to sustain them. Without that, the necessary human and technological resources won’t follow, and then nothing happens.

Analytics are truly a full-stack problem.

References

- BLOG@CACM on Data and Analytic Platforms

- The Importance of Effective Operational Systems for Successful Analytics

- Managing Critical Sections in Analytic Workloads

- Data and Analytic Platforms

- Clinical Measures

- Clinical Measures (Examples)

- CitiStat

- The Wire – “ComStat”

- CitiStat/StateStat Retraction (2017)

Doug Meil is a software architect in healthcare data management and analytics. He also founded the Cleveland Big Data Meetup in 2010. More of his BLOG@CACM posts can be found at https://www.linkedin.com/pulse/publications-doug-meil